CVPR2024

Lodge: A Coarse to Fine Diffusion Network for Long Dance Generation Guided by the Characteristic Dance Primitives

Abstract

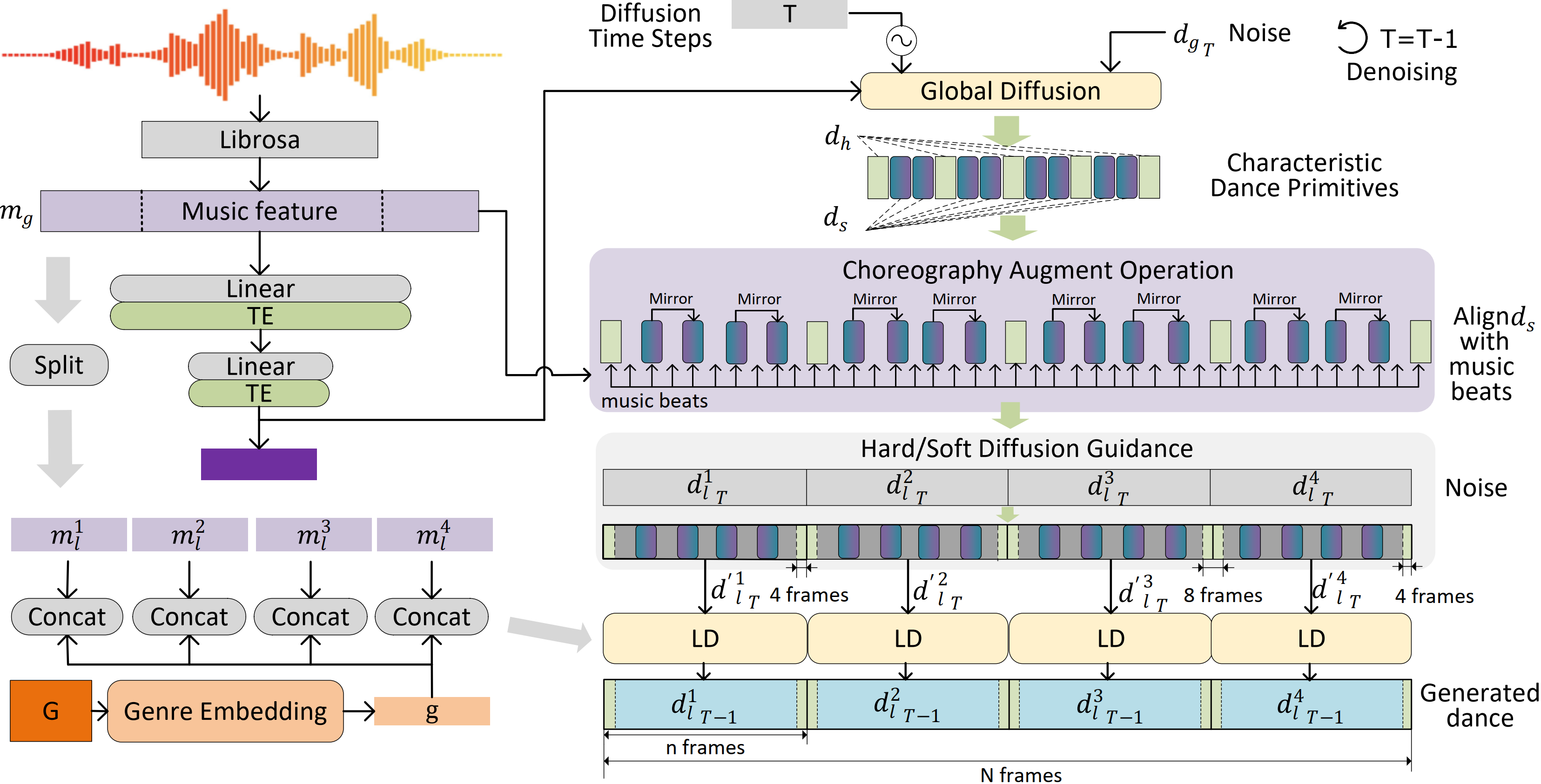

We propose Lodge, a network capable of generating extremely long dance sequences conditioned on given music. We design Lodge as a two-stage coarse-to-fine diffusion architecture and propose characteristic dance primitives, which possess significant expressiveness, as intermediate representations between the two diffusion models. The first stage, global diffusion, focuses on comprehending the coarse-level music-dance correlation and producing characteristic dance primitives. The second stage, local diffusion, generates detailed motion sequences in parallel under the guidance of the dance primitives and choreographic rules. In addition, we propose a Foot Refine Block to optimize the contact between the feet and the ground, enhancing the physical realism of the motion. Our approach can generate extremely long dance sequences in parallel, striking a balance between global choreographic patterns and local motion quality and expressiveness. Extensive experiments validate the efficacy of our method.
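The abstract does not say how the Foot Refine Block works internally. As a hypothetical illustration of the kind of foot-ground quantities such a block could target, the sketch below measures foot sliding during ground contact and ground penetration for a single foot trajectory. It assumes y is the vertical axis, the ground plane is y = 0, and "contact" means the foot stays below a height threshold in two consecutive frames. The function `contact_penalties` and its threshold are invented for this example; this is not the paper's Foot Refine Block.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z); y is assumed to be the vertical axis


def contact_penalties(foot_pos: List[Vec3],
                      height_thresh: float = 0.05) -> Tuple[float, float]:
    """Return (sliding, penetration) for one foot trajectory (hypothetical metric).

    sliding: mean squared horizontal displacement over consecutive frame pairs
             in which the foot stays below height_thresh (assumed ground contact).
    penetration: mean depth below the assumed ground plane y = 0 over all frames.
    """
    if not foot_pos:
        return 0.0, 0.0
    sliding, n_contact = 0.0, 0
    # Pair each frame with its successor to measure frame-to-frame motion.
    for (px, py, pz), (x, y, z) in zip(foot_pos, foot_pos[1:]):
        if py < height_thresh and y < height_thresh:
            # Only horizontal (x, z) motion counts as sliding while in contact.
            sliding += (x - px) ** 2 + (z - pz) ** 2
            n_contact += 1
    sliding = sliding / n_contact if n_contact else 0.0
    penetration = sum(max(0.0, -y) for _, y, _ in foot_pos) / len(foot_pos)
    return sliding, penetration
```

A foot planted at the origin scores `(0.0, 0.0)`, while a foot dragged along the ground or sunk below it is penalized. A refinement step could, under these assumptions, adjust foot positions to drive both terms toward zero.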

Method

To consider both the global choreographic rules and the local dance details simultaneously, we design a two-stage coarse-to-fine diffusion network. The first stage is the global diffusion, which uses the global music feature to learn choreographic patterns and produce characteristic dance primitives. The dance primitives are expressive key motions with higher kinematic energy. The second stage is the Local Diffusion (LD), which focuses on the quality of short-duration dance generation and can be run in parallel to improve generation efficiency.
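The paragraph characterizes dance primitives as key motions with higher kinematic energy and notes that local generation can run in parallel. The sketch below makes both ideas concrete under stated assumptions. Per-frame kinetic energy is computed from finite-difference joint velocities with unit mass per joint. The highest-energy frame in each fixed-size window is treated as a primitive. The spans between consecutive primitives become independent segments that a local model could fill in concurrently. The names `kinematic_energy`, `select_primitives`, and `local_segments`, the windowing rule, and the unit masses are all hypothetical. In Lodge itself the primitives are produced by the global diffusion model rather than picked by this heuristic.

```python
from typing import List, Sequence, Tuple

Pose = Sequence[Tuple[float, float, float]]  # one (x, y, z) position per joint


def kinematic_energy(poses: List[Pose], fps: float = 30.0) -> List[float]:
    """Per-frame kinetic energy 0.5 * sum_j |v_j|^2, assuming unit mass per joint."""
    if not poses:
        return []
    energy = [0.0]  # frame 0 has no predecessor, so it is treated as at rest
    for t in range(1, len(poses)):
        e = 0.0
        for (x, y, z), (px, py, pz) in zip(poses[t], poses[t - 1]):
            # Finite-difference velocity, scaled from per-frame to per-second units.
            vx, vy, vz = (x - px) * fps, (y - py) * fps, (z - pz) * fps
            e += 0.5 * (vx * vx + vy * vy + vz * vz)
        energy.append(e)
    return energy


def select_primitives(energy: List[float], window: int) -> List[int]:
    """Hypothetical rule: keep the highest-energy frame in each non-overlapping window."""
    keys = []
    for start in range(0, len(energy), window):
        chunk = energy[start:start + window]
        keys.append(start + max(range(len(chunk)), key=chunk.__getitem__))
    return keys


def local_segments(keys: List[int], n_frames: int) -> List[Tuple[int, int]]:
    """Spans between consecutive anchors; each span depends only on its endpoints."""
    bounds = sorted(set([0] + keys + [n_frames - 1]))
    return list(zip(bounds[:-1], bounds[1:]))
```

For example, a single joint moving along x with positions 0, 1, 1, 4 at `fps=1.0` has energies `[0.0, 0.5, 0.0, 4.5]`; with `window=2` the selected frames are `[1, 3]`, giving segments `[(0, 1), (1, 3)]`. Because each segment is conditioned only on its own boundary frames in this sketch, the segments could be dispatched to a worker pool or batched together, which mirrors the parallelism the paragraph describes.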

Results

As shown in the accompanying videos (please unmute for music), Lodge can generate long dances from given music.